Introduction

Mission-driven organizations face immense pressure to produce more educational materials, campaign updates, and donor reports with smaller budgets and limited staff. Given this resource strain, the promise of AI is hard to ignore: nonprofits that spend $20 monthly on AI tools can reportedly boost productivity by 20%, roughly the equivalent of an extra work day each week. While this efficiency looks tempting on paper, organizations that deploy algorithms without proper oversight often produce generic, robotic messaging that alienates communities. When nonprofits adopt automated content creation without a formal governance structure, they risk publishing biased information, exposing sensitive beneficiary data, and losing the authentic storytelling that inspires people to take action.

Furthermore, unstructured text diminishes search visibility because answer engines penalize mass-produced content and reward verified expertise. A practical roadmap helps organizations balance necessary technological efficiency with genuine human authenticity. Organizations protect their digital presence and community relationships when they move beyond unstructured experimentation and implement a formal strategy that guides how teams use modern drafting tools.

Automation Paradox in Organizational Communications

Modern drafting tools offer significant time savings, but language models also introduce the risk of publishing unedited text that alienates supporters. According to the Adecco Group, AI saves workers one hour each day, which allows professionals to dedicate more time to creative and strategic thinking. This efficiency appeals to overworked editorial teams, and organizations often rush to adopt automated content creation tools to handle emails, campaign updates, and social media posts.

However, this rapid adoption of AI content automation creates a paradox. Machines generate text quickly, but they lack the emotional intelligence necessary to engage communities authentically. Unedited outputs often read as mechanical or generic, and this damages the connection with the audience. Research from Koinsights shows that 52% of consumers disengage from a brand entirely when they suspect its content is AI-generated. Publishing unedited machine-generated text therefore undermines institutional credibility. Editors must balance algorithmic speed with human precision to maintain engagement. Organizations can explore a digital marketing guide to understand this balance better. Only human editors can inject the necessary confidence into the final narrative, and organizations should establish clear rules for technology usage to support them.

Transition From Experimentation to Formal AI Governance

These clear rules require organizations to view algorithms as a governance issue rather than a simple technological challenge. Many teams experiment with AI content automation without establishing proper guidelines. Data from Whole Whale reveals that 82% of organizations deploy AI tools while fewer than 10% maintain formal governance policies. This lack of oversight creates significant vulnerabilities. Without documented policies, staff members do not always realize when they feed sensitive beneficiary information into public language models. BDO USA warns that organizations risk exposing donor data if algorithmic tools lack strong security protocols. Leadership teams must draft formal policies that prioritize the safety of community information.

A thorough governance framework mandates the following actions:

- Staff members anonymize all beneficiary data before they enter prompts into any language model.
- The policy prohibits the use of confidential financial records in public generative tools.
- Editors review all external communications before publication.
- Organizations establish an approved list of secure algorithmic tools for staff usage.

These rules protect vulnerable populations and allow teams to benefit from technology. A specialized digital marketing agency often helps teams draft these essential frameworks. The establishment of these boundaries leads directly to better drafting practices.
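The first and last rules in the framework above can be partly enforced in software before a prompt ever leaves the organization. The following is a minimal sketch, not a complete anonymization solution: the tool name in `APPROVED_TOOLS`, the function names, and the regular expressions are all assumptions made for illustration, and pattern matching of this kind will miss many identifiers that a human reviewer would catch.

```python
import re

# Hypothetical allowlist; a real policy would name the tools leadership approved.
APPROVED_TOOLS = {"internal-drafting-assistant"}

# Simple illustrative patterns; they do not cover every email or phone format.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text, known_names=()):
    """Replace emails, phone numbers, and listed names with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    for name in known_names:
        text = re.sub(re.escape(name), "[NAME]", text, flags=re.IGNORECASE)
    return text


def prepare_prompt(text, tool, known_names=()):
    """Refuse unapproved tools, then return the redacted prompt."""
    if tool not in APPROVED_TOOLS:
        raise ValueError(f"{tool!r} is not on the approved tool list")
    return redact(text, known_names)
```

A staff member would pass the names that appear in a case file as `known_names`, then paste only the returned text into the approved tool. The check is a safety net that supports the written policy rather than replacing it.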

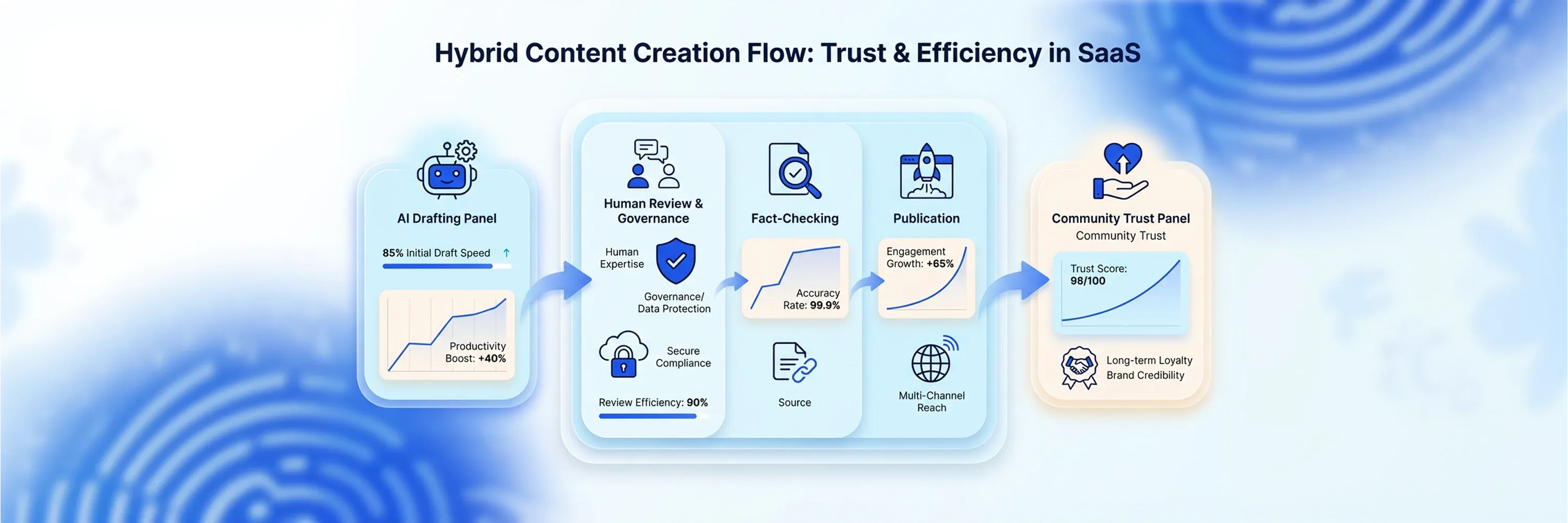

Essential Hybrid Workflow for Automated Content Creation

These better drafting practices rely on a hybrid workflow in which editorial teams start their drafting process with machines and finish with human editors. This hybrid approach resolves the tension between technological speed and authentic narratives. Algorithms generate outlines, suggest headlines, and structure initial drafts well. Humans add emotion, verify facts, and ensure cultural context. When teams rely solely on algorithms, they produce generic text that modern search engines frequently penalize. Answer engines prioritize authoritative and expert-verified information over mass-produced material. According to Averi AI, hybrid AI-human content ranks 34% higher than unedited, purely AI-generated content.

A combination of machine efficiency and human oversight helps ensure the soundness of the final message. Editors review the algorithmic output, rewrite mechanical sentences, and inject specific organizational experiences into the narrative. This collaborative method increases the reliability of the published materials. Professionals can study an AI content creation workflow to understand this process better. This partnership between humans and machines relies on a specific division of labor that maximizes productivity and maintains quality.

Application of Seventy-Thirty Rule

An effective workload division allocates seventy percent of the drafting work to algorithms and reserves thirty percent for human refinement. The machine helps the team avoid blank page syndrome: it generates the initial structure, compiles basic information, and formats the text. The human editor takes this foundation and spends the remaining thirty percent of the effort on narrative refinements. The editor adds specific case studies, adjusts the tone, and aligns the message with organizational values.

This structured approach produces ethical AI content that resonates with readers. Data from Averi AI indicates that a hybrid workflow achieves 59% faster content creation and better engagement than purely AI-generated content. This specific ratio gives teams the predictability they need to meet publishing deadlines and maintain accuracy.

Prevention of Algorithmic Hallucinations

To maintain this accuracy, human editors manually verify all facts, statistics, and historical references that language models generate. These models work by predicting the next likely word in a sequence, so they can invent plausible but false information. A study published in the Journal of Medical Internet Research, which tested language models on generating references for systematic reviews, reported hallucination rates of 39.6% for GPT-3.5 and 28.6% for GPT-4. Publishing such fabricated data points damages an organization's credibility.

Editors must approach every machine-generated draft with skepticism. They must cross-reference dates, names, and financial figures against verified internal documents, and they should read algorithmic outputs on the assumption that errors exist within the text. Thorough manual verification prevents embarrassing retractions and protects the integrity of the institution. Editors focus on writing style improvements only after they verify the facts.
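Part of the cross-referencing described above can be prepared automatically so that the editor knows where to look first. The sketch below is one possible approach under stated assumptions: the function name `unverified_figures` and the idea of a hand-maintained set of verified figures are hypothetical, and the pattern only catches numerals, not names or dates written in words. It lists candidates for review and does not replace the manual check.

```python
import re

# Matches figures such as "82%", "$20", "1,250", or "39.6%".
FIGURE = re.compile(r"\$?\d+(?:,\d{3})*(?:\.\d+)?%?")


def unverified_figures(draft, verified):
    """Return figures in the draft that are absent from the verified set."""
    flagged = []
    for figure in FIGURE.findall(draft):
        # Keep first-appearance order and skip duplicates.
        if figure not in verified and figure not in flagged:
            flagged.append(figure)
    return flagged


draft = "We served 1,250 families in 2023, and 82% returned. Costs fell 15%."
verified = {"1,250", "2023", "82%"}  # figures confirmed in internal records
print(unverified_figures(draft, verified))
```

Any figure the function returns still needs a human decision: it may be a hallucination, or it may simply be missing from the verified list.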

Publication of Ethical AI Content for Digital Campaigns

These writing style improvements require human editors to remove predictable linguistic patterns and mechanical phrases before they publish campaign materials. Language models tend to overuse specific transition words and construct monotonous sentences. Editors break up these predictable structures when they vary sentence length and eliminate repetitive vocabulary. They replace generic statements with authentic stories from the field. This refinement process keeps campaigns grounded in organizational values. Research from NPTechForGood stresses that organizations must intentionally establish clear governance policies anchored in mission and organizational values.
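The two patterns named above, overused transition words and monotonous sentence length, are simple enough to measure before an editor starts rewriting. The following sketch is illustrative only: the `WATCHLIST` phrases and the `style_report` function are assumptions, and each team would choose its own phrases and decide what spread of sentence lengths counts as too uniform.

```python
import re
from statistics import pstdev

# Illustrative watchlist only; each team would maintain its own.
WATCHLIST = ("furthermore", "moreover", "additionally", "in conclusion")


def style_report(text):
    """Summarize sentence-length variation and watchlist phrase counts."""
    # Split after sentence-ending punctuation followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    lowered = text.lower()
    counts = {
        phrase: len(re.findall(r"\b" + re.escape(phrase) + r"\b", lowered))
        for phrase in WATCHLIST
    }
    return {
        "sentence_lengths": lengths,
        # A spread near zero suggests every sentence has a similar length.
        "length_spread": pstdev(lengths) if lengths else 0.0,
        "flagged_phrases": {p: n for p, n in counts.items() if n > 0},
    }
```

A report like this only points the editor toward passages worth a second look. Replacing generic statements with authentic stories from the field remains a human task.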

Transparent communication about technology usage builds trust with donors and community members. Organizations demonstrate accountability when they admit they use algorithms for initial drafting and rely on humans for final editing. This honest approach strengthens community relationships and ensures search engines recognize the human expertise behind the message.

Visibility Through Generative Engine Optimization

While traditional search engines recognize human expertise, emerging answer engines evaluate institutional credibility differently. These new platforms prioritize structured data and verifiable facts over generic paragraphs. When organizations rely entirely on automated content creation without human oversight, they produce unstructured text that modern platforms ignore. Answer engines scan the internet for specific expertise and properly formatted information.

They reward material that includes clear citations, distinct headings, and accurate numbers. Because these algorithms want to provide direct answers, they favor text that demonstrates high quality and thorough research.

Why Human Oversight Matters More Than Automated Fundraising Content

Professionals adapt their writing strategies to maintain visibility during this shift. If professionals want their ethical AI content to surface in these new environments, they must include concrete data points and expert quotes. According to Averi AI, including statistics boosts AI citation likelihood by up to 115% for lower-ranked material. Machine algorithms look for these factual anchors to validate the information. Human editors add these critical statistics during the review process. Professionals ensure their narratives appear in direct AI answers when they incorporate these factual anchors.

These professionals often study search engine optimization strategies to format their published materials properly. This focused approach connects organizations with audiences actively searching for specific causes.

Community Trust Through Specific Transparency

When organizations connect with these active audiences, they build stronger community relationships by speaking openly about their drafting processes. Contributors do not want to read generic disclaimers at the bottom of newsletters because these vague statements fail to meet expectations. People want to know exactly how teams manage sensitive information and draft their communications. Explicit details about AI content automation offer contributors peace of mind regarding how the institution uses their funds.

Honest communication about technology usage prevents misunderstandings and demonstrates accountability. Audiences feel comfortable when they understand the internal editorial workflow because human editors oversee the final messaging. Research from Martech Edge shows that 57% of consumers trust brands more when artificial intelligence operates as part of a transparent experience. Organizations produce ethical AI content when they share specific details about their operational guidelines.

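Teams explicitly share their procedural steps with their communities, such as which tasks algorithms handle during initial drafting and how human editors review the final text.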

These concrete steps prove that the institution values authenticity over mass production. This openness protects the institutional reputation and sets a positive example for other organizations.

Quality Control Connection to Financial Impact

Alongside a strong reputation, rigorous editorial standards directly influence an organization's financial success. Contributors evaluate the authenticity of campaigns before they decide to send their money. If an organization relies exclusively on AI content automation without human oversight, the resulting mass-produced appeals fail to inspire action.

Contributors treat authentic community stories as a refuge from the constant noise of generic internet marketing. People feel safe when they support institutions that communicate with empathy and deep cultural understanding.

Why Human Editors Are Essential for Building Trust and Increasing Donations

Human editors refine every machine-generated draft because financial contributions rely on emotional connections. These professionals demonstrate respect for the audience and build long-term relationships through thoughtful communication strategies. According to Spotfund, 78% of people give more generously when they feel understood by the organization. Automated content creation systems cannot replicate this deep organizational understanding on their own. Instead, human editors turn a mechanical request for funds into a compelling narrative that resonates with personal values. Organizations maximize their financial impact only when they prioritize editorial quality over raw output volume.

Teams protect their brand reputation and inspire greater generosity from their communities when they implement strict quality control measures. This commitment to authentic storytelling secures the vital resources needed to advance the institutional mission.

Conclusion

To summarize the major points, organizations secure these vital resources when they use automated content creation strictly as a drafting engine rather than a replacement for genuine human connection and storytelling. They establish strong oversight to protect their missions from reputational damage and prevent the publication of generic messaging that alienates supporters. In the future, these organizations will secure visibility in evolving answer engines because they balance machine efficiency with authentic narratives. A formal algorithmic governance policy ensures they remain protected today. Exploring a specialized AI Content Generator helps staff members understand how structured templates improve workflows and align with clear editorial standards.