Introduction

Mission-driven organizations want to expand their digital reach, but they operate with limited resources. For decades, these organizations relied on human writers to share impact stories, mobilize volunteers, and secure funding. Today, leadership teams look to new technology to maintain output without increasing overhead.

While 92% of nonprofits use AI, many organizations struggle with generic outputs that alienate their supporters. They adopt artificial intelligence because they hope for immediate efficiency gains, but they often produce mechanical narratives that fail to inspire action. Donors and volunteers connect with missions through genuine human emotion rather than algorithmic text. If leadership teams deploy AI content creation improperly, they might erode the foundational trust that sustains their operations. Organizations can integrate artificial intelligence responsibly to expand their digital visibility and preserve the authentic human connection that drives ongoing support.

Authenticity Paradox

Organizations want to preserve this authentic human connection, yet many of them view automated storytelling as a cheap way to produce hundreds of reports and updates. If they replace human writers entirely with algorithms, they lose the emotional core that makes their message matter. Algorithms process data and assemble words, but they do not experience daily struggles or human triumphs. Generic narratives shift public attention away from the actual impact and toward the artificial tone of the message, and this mechanical approach damages the organization's credibility.

Research suggests that readers detect this missing human connection. According to a University of Kansas study, readers trust news less when AI is involved, even if they do not fully understand the extent of its use. When readers open a trusted newsletter and sense an algorithmic voice, they may question the authenticity of the entire operation and wonder whether the shared stories are genuine.

Over time, this skepticism erodes the supporter relationships that secure long-term loyalty. Organizations rely on genuine human experiences to inspire action, and a machine's output cannot replicate human passion. In the end, a complete shift to artificial intelligence creates a barrier between the organization and its supporters.

AI-Ready Voice Profile

Because generic algorithms create this barrier and produce forgettable text, organizations should build an AI-ready voice profile before they begin AI content creation. This profile acts as a blueprint that teaches the language model how to sound like the organization. To build it, content teams gather their most successful historical newsletters, articles, and reports, feed these documents into the system, and instruct the model to analyze their tone, vocabulary, and sentence structure. This process turns automated storytelling from a robotic exercise into a reliable extension of the organization's identity.

Teams must document specific elements in their voice profile so that algorithms do not revert to standard machine language:

- Core values that drive daily operations.
- Specific terminology used to describe supporters.
- Topics and phrases the organization refuses to use.
- Examples of emotional appeals that resonated with past readers.
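To make these elements concrete, here is a minimal sketch of how a team might store a voice profile as data, turn it into plain-text instructions for a language model, and flag drafts that slip back into discouraged language. The `VoiceProfile` class, its field names, and the sample terms are hypothetical illustrations, not part of any particular AI tool.

```python
from dataclasses import dataclass, field


@dataclass
class VoiceProfile:
    """Hypothetical container for the voice-profile elements listed above."""
    core_values: list[str]
    preferred_terms: dict[str, str]  # term to avoid -> term the organization uses
    banned_phrases: list[str]
    example_appeals: list[str] = field(default_factory=list)

    def to_instructions(self) -> str:
        """Render the profile as plain-text instructions for a language model."""
        lines = ["Write in our organization's voice."]
        lines.append("Core values: " + "; ".join(self.core_values))
        for avoid, prefer in self.preferred_terms.items():
            lines.append(f'Say "{prefer}", never "{avoid}".')
        lines.append("Never use: " + ", ".join(self.banned_phrases))
        for appeal in self.example_appeals:
            lines.append("Example of an appeal that resonated: " + appeal)
        return "\n".join(lines)

    def violations(self, draft: str) -> list[str]:
        """List banned phrases and discouraged terms that appear in a draft (case-insensitive)."""
        text = draft.lower()
        found = [p for p in self.banned_phrases if p.lower() in text]
        found += [t for t in self.preferred_terms if t.lower() in text]
        return found


profile = VoiceProfile(
    core_values=["dignity", "community"],
    preferred_terms={"the needy": "our neighbors"},
    banned_phrases=["game-changer"],
)
print(profile.violations("This game-changer program helps the needy."))
# ['game-changer', 'the needy']
```

The same check can run on every AI draft before a human editor sees it, so editors spend their time on empathy rather than on catching off-brand vocabulary.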

Even with a detailed profile, technology cannot replace the human element of writing. Experts at Lighthouse Counsel note that AI-generated content lacks the human touch and that organizations should not rely on it entirely for outreach. The machine provides a strong starting point, but human writers must add the genuine empathy that connects with readers. By establishing a clear voice profile, teams give the algorithm a framework for drafts that sound authentic, which frees staff members to spend their time on the emotional details that inspire loyalty.

70/30 AI Content Creation Workflow

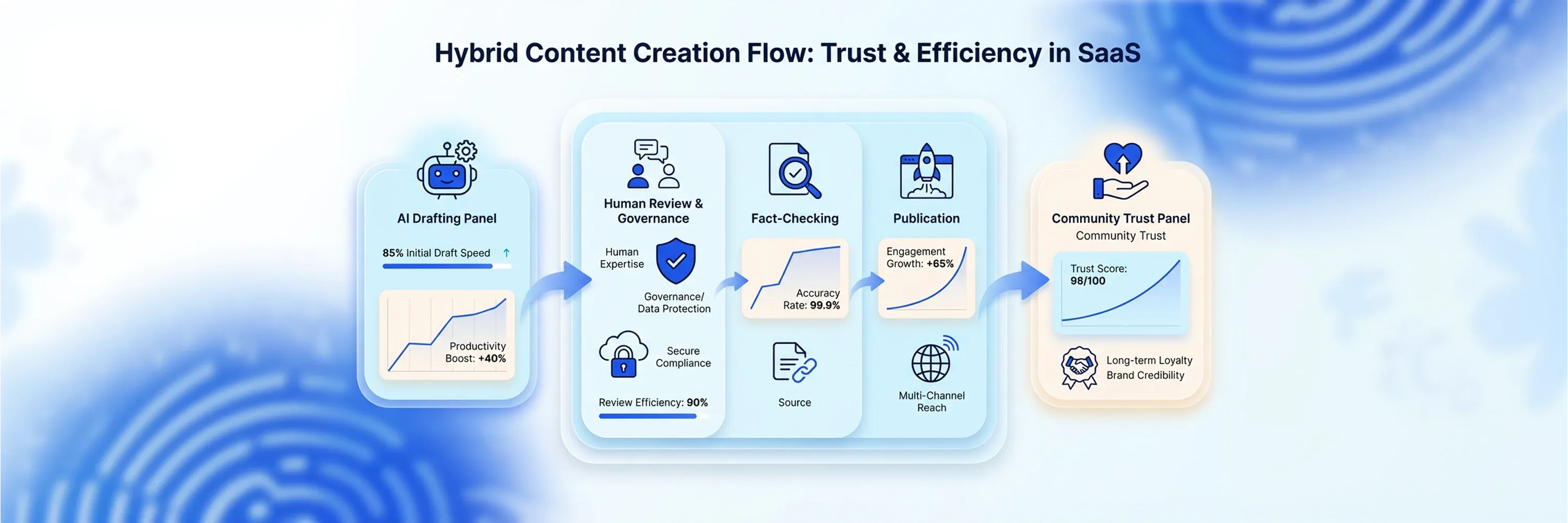

Because staff members must focus on these emotional details, organizations need a structured framework that keeps algorithms from taking over the entire writing process. The most effective AI content workflows divide the labor strategically between machines and humans, so that technology handles the tedious tasks while human writers protect the emotional core of the message. Practitioners often call this approach the 70/30 rule.

Under this framework, language models handle the heavy lifting of the early creation phases. Some business leaders suggest that generative tools take on 50 to 70 percent of the initial work, because the remaining work is what differentiates the organization. Algorithms can process large amounts of raw data, summarize complex reports, and generate initial content outlines. According to digital strategists, the best practice allocates about 70% of the effort to AI for research and drafting and reserves the remaining 30% for human editing.

The other 30% belongs exclusively to human judgment. After the machine generates a draft, staff members review the text to ensure it aligns with the organization's core values. Human editors add emotional resonance to the stories of beneficiaries and employees. They also perform rigorous fact-checking to verify that every statistic and quote is accurate. If teams leave fact-checking to the algorithm, they risk publishing false information that damages their public reputation. Well-designed AI content workflows let machines speed up the writing process without compromising the factual accuracy and human warmth that readers expect.
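One way to picture the 70/30 division is as a list of stages, each owned by either the model or a person, plus a rule that nothing publishes until every human stage is done. The sketch below assumes hypothetical stage names and placeholder hour estimates chosen to add up to a 70/30 split; they are not benchmarks.

```python
# Hypothetical workflow: each stage has an owner ("ai" or "human") and
# illustrative estimated hours. The numbers are placeholders, not benchmarks.
STAGES = [
    ("research", "ai", 4.0),
    ("outline", "ai", 1.5),
    ("first_draft", "ai", 1.5),
    ("values_review", "human", 1.0),
    ("emotional_edit", "human", 1.0),
    ("fact_check", "human", 1.0),
]

# Human-owned stages that must be completed before anything is published.
HUMAN_CHECKS = {name for name, owner, _ in STAGES if owner == "human"}


def effort_split(stages: list[tuple[str, str, float]]) -> tuple[float, float]:
    """Return the (AI, human) shares of total estimated hours."""
    total = sum(hours for _, _, hours in stages)
    ai = sum(hours for _, owner, hours in stages if owner == "ai")
    return ai / total, (total - ai) / total


def can_publish(completed_stages: set[str]) -> bool:
    """A draft ships only after every human check, including fact-checking, is done."""
    return HUMAN_CHECKS <= completed_stages


print(effort_split(STAGES))  # (0.7, 0.3)
print(can_publish({"research", "outline", "first_draft"}))  # False
```

The publish gate encodes the point that fact-checking stays with people: an AI-complete draft is still blocked until the human stages are marked done.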

Generative Engine Optimization for Nonprofits

While proper workflows protect factual accuracy and human warmth, organizations face a new challenge: search engines now use generative artificial intelligence to answer user questions directly. Traditional search optimization focused on ranking high on standard results pages, but Generative Engine Optimization (GEO) focuses on becoming a cited source within AI-generated answers. Organizations need to adapt their digital storytelling to this new reality.

When language models build responses, they look for authoritative information across the web, not just on the most popular websites. One recent analysis found that 52% of AI citations come from sources outside the top 100 Google results. This suggests that smaller organizations have a realistic path to visibility if they structure their information well, because language models appear to weigh clarity and context more heavily than domain authority. Nonprofits can capture these citations, and scale AI content creation, by publishing well-structured case studies that answer specific questions, since algorithms draw on properly formatted data to answer donor inquiries. Feeding these generative engines effectively requires specific steps.

Automated Storytelling Structure for AI Search

Organizations follow these steps because language models scan websites differently than human readers do. Models rely on consistent formatting to understand the relationships between pieces of information, and a clear document structure helps them parse and retrieve details about a specific program or campaign. Organizations use descriptive headings to break their articles into logical sections, because a clear heading signals exactly what information the section contains. Writers also add schema markup to categorize their data. Schema markup acts as a label, invisible to readers, that tells the search engine whether the text describes a fundraising event, a volunteer opportunity, or a financial report.
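Schema markup is commonly embedded as JSON-LD inside a `<script type="application/ld+json">` tag, using the schema.org vocabulary. The minimal sketch below builds such a tag for a fundraising event with schema.org's `Event` type; the event name, date, venue, and organizer are hypothetical placeholders, and the `jsonld_script` helper is an illustration rather than a feature of any CMS. A financial report could use schema.org's `Report` type in the same way.

```python
import json


def jsonld_script(schema_type: str, properties: dict) -> str:
    """Wrap schema.org properties in a JSON-LD <script> tag for a page's HTML."""
    data = {"@context": "https://schema.org", "@type": schema_type, **properties}
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


# Hypothetical fundraising event; names, date, and venue are placeholders.
snippet = jsonld_script(
    "Event",
    {
        "name": "Spring Food Drive",
        "startDate": "2025-04-12",
        "location": {"@type": "Place", "name": "Riverside Community Hall"},
        "organizer": {"@type": "Organization", "name": "Example Food Bank"},
    },
)
print(snippet)
```

Generating the markup from the same data that fills the visible page keeps the hidden label and the readable text from drifting apart.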

When algorithms can easily categorize information, they are more likely to cite that source in their generated answers. Automated storytelling fails when organizations publish massive blocks of text without navigational markers, while clean structure gives the machine exactly what it needs to construct an accurate response.

Statistics for Impact Validation

Along with a clean structure, algorithms prioritize numerical data when they construct answers for users. Factual claims carry more weight in generative search than emotional narratives do. Organizations increase their digital visibility when they embed proprietary research and program metrics directly into their articles. Instead of stating that a community program succeeded, organizations publish the exact number of meals served or students tutored. Researchers at Princeton University found that adding statistics improves visibility by up to 40%.

Language models use these data points to build confident responses. A food bank that publishes an annual hunger report with original statistics can become a primary source for algorithms, and regular numerical updates make it more likely that the search engine selects the organization's data when users ask about local community needs.

Mentions Outside Traditional Search

Numerical updates strengthen an organization's own pages, but generative engines also look beyond its website to determine authority. They draw on training data that includes public forums, news articles, and social platforms. External mentions signal to the algorithm that the organization matters to the public: when an environmental group receives coverage in a local newspaper or is discussed in a community forum, the algorithm records those connections.

One analysis of search behavior found that sites with heavy Reddit activity average 7 AI citations per 100 queries. These off-page signals act as third-party endorsements of the organization's expertise, so teams share their case studies on external platforms and encourage community discussion. Generative engines appear to favor organizations that spark conversations across the internet and reward them with prominent citations in generated answers.
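Teams that want to track a comparable "citations per 100 queries" figure for their own organization could run a fixed set of questions through AI search tools, record the URLs each answer cites, and count how often their domain appears. The sketch below assumes that workflow; how the cited analysis computed its own metric is not stated, and the domains shown are hypothetical.

```python
from urllib.parse import urlparse


def is_own_domain(url: str, domain: str) -> bool:
    """True if the URL's host is the domain itself or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def citations_per_100_queries(answers: list[list[str]], domain: str) -> float:
    """answers holds the cited URLs of one AI-generated answer per tracked query."""
    if not answers:
        return 0.0
    cited = sum(1 for urls in answers for url in urls if is_own_domain(url, domain))
    return 100 * cited / len(answers)


# Hypothetical results for four tracked queries.
answers = [
    ["https://examplefoodbank.org/hunger-report"],
    [],
    ["https://news.example.com/story", "https://www.examplefoodbank.org/programs"],
    [],
]
print(citations_per_100_queries(answers, "examplefoodbank.org"))  # 50.0
```

Repeating the same query set each month turns this into a trend line that shows whether outreach on external platforms is changing how often the organization gets cited.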

Ethical Guardrails

Although algorithms reward these digital conversations, the technology also introduces new risks to the storytelling process. Algorithms can hallucinate facts, expose sensitive data, or introduce unintended biases into published content, so organizations need clear policies governing how staff members use generative tools. These ethical guardrails ensure that the technology serves the mission safely. For example, if a charity feeds raw beneficiary interviews into a public language model, that private information might leak into the public domain. Strict data governance rules help prevent this kind of leak.

Organizations establish effective AI content workflows when they define exactly how staff members interact with generative tools. These procedures require human editors to check every draft for algorithmic bias and factual errors. Audiences also expect honesty about technological assistance: a 2025 study found that 92% of surveyed respondents consider it important to disclose AI use plainly. Transparency builds trust with human readers and may also align with the quality signals that search algorithms look for.

Teams follow specific steps to keep vulnerable communities protected during AI content creation:

- Teams anonymize all personal data before they feed transcripts or notes into any language model.
- Editors review generated drafts to ensure algorithms do not apply stereotypes to specific demographic groups.
- Writers add a clear disclaimer to the bottom of published articles to indicate artificial intelligence assistance.
- Organizations store all proprietary research and donor data on secure and private servers rather than public platforms.
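The first safeguard, anonymizing personal data before it reaches a language model, can start with a simple redaction pass. The sketch below is deliberately basic: the regular expressions and the `anonymize` helper are illustrative assumptions that catch common email and phone formats plus a list of names the team already knows, and they will miss many other identifiers, so a human review remains necessary.

```python
import re

# Deliberately simple patterns for illustration; they will miss many formats
# and are not a substitute for a proper anonymization review.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def anonymize(text: str, known_names: list[str]) -> str:
    """Replace emails, phone numbers, and listed names with placeholders."""
    # Redact contact details first so a name inside an email address
    # cannot leave the rest of the address behind.
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    for i, name in enumerate(known_names, start=1):
        pattern = re.compile(r"\b" + re.escape(name) + r"\b", re.IGNORECASE)
        text = pattern.sub(f"[PERSON_{i}]", text)
    return text


transcript = "Maria Lopez (maria@example.org, 555-123-4567) said the pantry helped."
print(anonymize(transcript, ["Maria Lopez"]))
# [PERSON_1] ([EMAIL], [PHONE]) said the pantry helped.
```

Numbered placeholders such as `[PERSON_1]` keep the story readable for the model while the mapping back to real names stays on the organization's own servers.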

These practices help the organization scale its digital presence while meeting its moral obligations.

Storytelling ROI Measurement

Once these ethical practices are in place, leadership teams need reliable methods to measure how technological investments affect their operations. Basic engagement metrics such as page views and social media likes no longer provide enough insight, so organizations track how automated storytelling influences donor behavior and volunteer recruitment. A robust measurement strategy connects digital output to real-world outcomes: when a foundation publishes a grant report, for example, the executive team tracks whether that report led to new funding applications.

Tracking AI-Driven Discovery

To measure this return on investment (ROI), organizations track referral traffic from generative search engines. Analytics platforms show when users arrive at a website after clicking a citation in an AI-generated answer. Teams monitor these referral rates to see which case studies perform best in AI search, then adjust their AI content workflows to produce more of that material. The financial community already expects these investments to yield results: a recent industry report indicates that 91% of survey respondents expect to see a positive impact from AI within three years.
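As a rough illustration, AI-driven visits can be separated from other traffic by checking the referrer of each visit against a list of AI assistant hostnames. The hostnames below are assumptions based on commonly seen AI tools and should be checked against the organization's own analytics data; some assistants send no referrer at all, so a count like this may undercount AI-driven discovery.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hosts for AI assistants; confirm against your own analytics,
# since hostnames change and some assistants send no referrer at all.
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "chat.openai.com",
    "perplexity.ai",
    "www.perplexity.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
}


def ai_referrals_by_page(visits: list[tuple[str, str]]) -> Counter:
    """Count visits per landing page whose referrer is a known AI assistant."""
    counts: Counter = Counter()
    for landing_page, referrer in visits:
        host = urlparse(referrer).hostname or ""
        if host in AI_REFERRER_HOSTS:
            counts[landing_page] += 1
    return counts


# Hypothetical rows exported from an analytics tool.
visits = [
    ("/case-studies/literacy", "https://chatgpt.com/"),
    ("/case-studies/literacy", "https://www.perplexity.ai/search"),
    ("/donate", "https://www.google.com/"),
    ("/case-studies/meals", ""),
]
print(ai_referrals_by_page(visits).most_common())
# [('/case-studies/literacy', 2)]
```

Ranking landing pages this way points directly at the case studies that generative engines cite most, which is the signal teams use to decide what to produce next.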

Proving Impact Through Conversions

This expectation pushes organizations to prove their efficiency. Teams set up conversion tracking to monitor how many visitors sign up to volunteer or make a donation after reading an AI-assisted article, and they compare these conversion rates against older, manually written content. If the new workflow maintains or increases donations while reducing production time, the organization has a proven model for sustainable growth. These metrics show whether the technology provides real value to the mission.
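The comparison described above reduces to two questions: does AI-assisted content convert at least as well, and does it take less time to produce? The sketch below encodes that rule with hypothetical figures; in practice, teams would also want enough visitors, or a significance test, before treating a small difference in rates as real.

```python
from dataclasses import dataclass


@dataclass
class ContentStats:
    visitors: int
    conversions: int  # volunteer sign-ups plus donations
    production_hours: float

    @property
    def conversion_rate(self) -> float:
        return self.conversions / self.visitors if self.visitors else 0.0


def workflow_is_proven(ai: ContentStats, manual: ContentStats) -> bool:
    """True if AI-assisted content converts at least as well in less production time."""
    return (
        ai.conversion_rate >= manual.conversion_rate
        and ai.production_hours < manual.production_hours
    )


# Hypothetical figures for one quarter of articles.
manual = ContentStats(visitors=4000, conversions=80, production_hours=12.0)
ai_assisted = ContentStats(visitors=5000, conversions=110, production_hours=5.0)
print(f"{manual.conversion_rate:.1%} vs {ai_assisted.conversion_rate:.1%}")  # 2.0% vs 2.2%
print(workflow_is_proven(ai_assisted, manual))  # True
```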

Conclusion

Because these metrics demonstrate the technology's value, teams can maintain a strategic balance between automated generation and human refinement as they scale digital operations. Organizations use ethical frameworks and structured processes to protect stakeholder trust and expand their reach. Structured data also helps them organize their narratives, much as vector databases such as Pinecone improve information retrieval. As search algorithms evolve, organizations will increasingly rely on authentic narratives to secure visibility in algorithmic responses. Leaders can take the next step by auditing current content processes, adopting AI content creation systems that amplify organizational purpose, and implementing clear editorial standards so that technology serves the mission and inspires future supporters.