Introduction

Digital discovery has fundamentally changed, and organizations must adapt. For decades, internet users navigated lists of blue links to find information, research causes, and connect with non-governmental initiatives. Today, generative response models synthesize data and deliver complete answers directly to the user. This shift means that traditional search visibility no longer guarantees discovery. In fact, more than 80% of Google searches end without a click to an external website.

When users receive immediate answers, they rarely navigate away from the results page. This reality changes generative engine optimization from a technical tactic into a governance priority. Adapting AI and content marketing strategies determines whether an organization's expertise remains visible or fades into algorithmic obscurity. If systems cannot parse, verify, and trust an organization's digital footprint, that organization essentially disappears from the modern internet. Organizations need to restructure their digital architecture. This article details how to navigate this landscape and align knowledge distribution with the strict requirements of machine extraction.

Great Separation of Search Traffic

The strict requirements of machine extraction force organizations to reconsider the top search rankings they once relied on to attract supporters and drive digital visibility. In the past, they optimized their websites for specific keywords and expected a steady stream of visitors. This traditional model no longer guarantees discovery because generative response models now answer user queries directly.

A recent analysis reveals that only 38% of citations in generated answers come from pages that rank in the top 10 of traditional search results. In other words, a massive disconnect exists between ranking well and appearing as a trusted source in generated answers.

Zero-Click Search Is Changing Donor Discovery

Organizations must recognize this shift and adapt their digital infrastructure. High organic traffic metrics can obscure the reality that zero-click searches dominate the user experience. Because users get immediate answers and do not click external links, organizations lose their primary channel for donor discovery. To respond, professionals must understand how algorithms extract information and abandon tactics built for the old model.

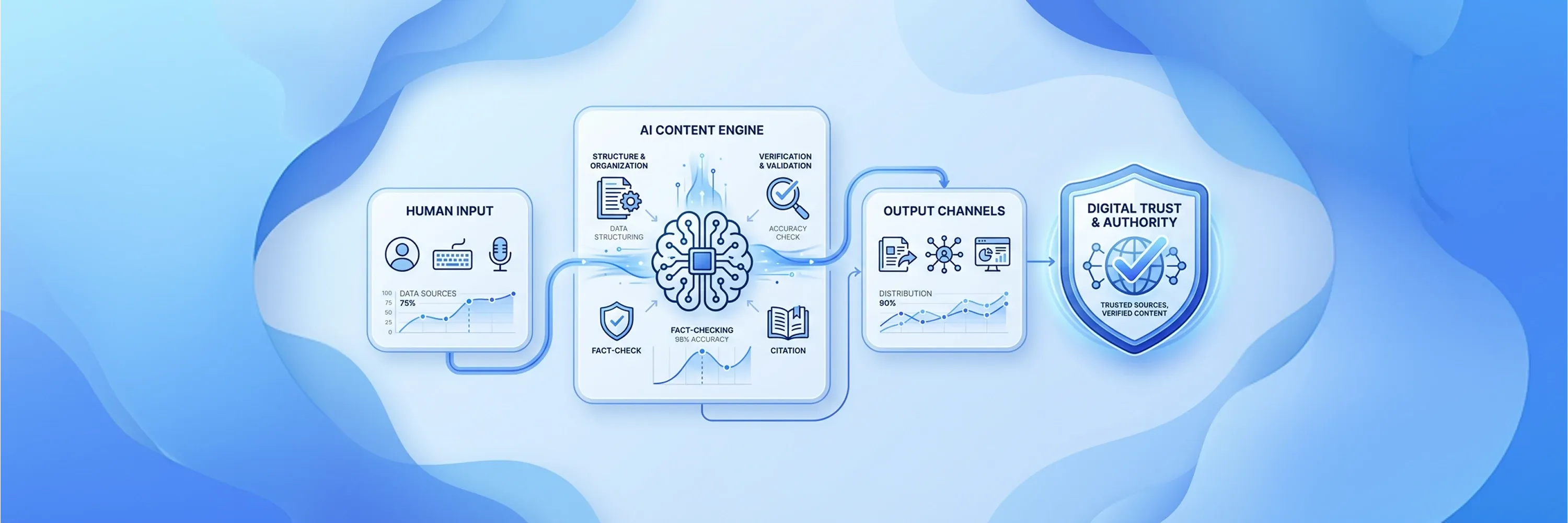

The integration of AI and content marketing bridges the gap between human storytelling and machine extraction. Organizations need to restructure their digital assets so that AI search engines can process their mission-driven initiatives. When organizations optimize for algorithmic environments, they build digital footprints that intelligent systems understand and cite. This structural adaptation ensures that charitable groups remain discoverable to the audiences they want to reach.

Redefinition of Trust for Mission-Driven Organizations

After charitable groups become discoverable through structural adaptation, intelligent systems evaluate their credibility and select sources for responses using a specific mathematical framework. Unlike human readers, they are not inspired by a mission statement to share an organization's work. Instead, they weigh specific signals to determine which sources deserve visibility. Research shows that ChatGPT weights authority at 40%, content quality at 35%, and platform trust at 25% when it selects its sources. This formula dictates how charities must present their impact data.
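To make the weighting concrete, the following is a minimal sketch of how three signals could combine into one score. Only the 40/35/25 split comes from the figures cited above. The 0-to-1 sub-scores, the function name `source_score`, and the example values are assumptions for illustration, not a description of how any model actually computes trust.

```python
# Weights taken from the percentages cited above; everything else is illustrative.
WEIGHTS = {"authority": 0.40, "content_quality": 0.35, "platform_trust": 0.25}


def source_score(signals: dict[str, float]) -> float:
    """Combine per-signal scores (each assumed to lie in [0, 1]) into one weighted score."""
    for name in WEIGHTS:
        value = signals[name]
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return sum(WEIGHTS[name] * signals[name] for name in WEIGHTS)


# Hypothetical charity: strong authority, weaker technical platform.
example = {"authority": 0.9, "content_quality": 0.7, "platform_trust": 0.4}
print(round(source_score(example), 3))
```

Under these assumed inputs, the weak platform score pulls the total down even though authority is high, which is why the later sections treat infrastructure and corroboration as seriously as content.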

Commercial metrics focus on sales and conversions, but charitable organizations must build a different kind of trust. They must highlight beneficiary outcomes and operational transparency to pass algorithmic scrutiny. This approach forms the foundation of modern digital discovery. Organizations need to publish concrete impact data that intelligent systems can verify across multiple channels. A specialized digital approach becomes critical for charities that want to maintain relevance. Machine learning models reward transparency and penalize vague claims about making a difference.

Construction of Authority Signals

Because machine learning models reward transparency, algorithms assess organizational authority before they evaluate any other ranking factor. This initial evaluation layer determines whether an organization qualifies for inclusion in generated responses. Industry analysts note that authority operates as the foundational principle in AI search rather than a secondary ranking factor. Organizations must establish themselves as definitive experts in their specific operational areas.

When organizations publish original research and primary data, they create strong content authority signals that algorithms recognize. General observations don't carry the same weight as firsthand impact reports. Organizations must ensure their teams produce specialized knowledge that other reputable entities cite and reference. This practice is indispensable for long-term visibility. Once AI search engines verify an organization's authority, they confidently distribute that organization's insights to curious users.

Establishment of Content Quality Standards

After AI search engines verify this authority and distribute insights, nonprofit publications must demonstrate lived expertise to satisfy content quality standards. Machine learning models scan documents for depth and nuance rather than keyword density. Content with strong Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) demonstrates that lived expertise, and models prioritize it over technical optimization tricks. Organizations should therefore avoid shallow blog posts and release detailed analyses of their field operations instead.

The creation of these detailed resources requires a rigorous editorial process. Authors must back their claims with verifiable data and document their methodologies. These practices generate strong authority markers that prove an organization's competence. If a global water charity builds new wells, its articles must detail the geological challenges and engineering solutions. This level of depth proves to the algorithm that the organization possesses genuine, firsthand knowledge.

Measurement of Platform Trust

While firsthand knowledge proves depth to the algorithm, generative models also evaluate the technical infrastructure and external corroboration of the source platform. They analyze how an organization manages its website security, user experience, and technical performance. Algorithmic models apply multi-dimensional trust signals that include source authority, content quality, corroboration, infrastructure, and citation graphs. A poorly maintained website signals operational instability to intelligent systems.

Platform trust also relies heavily on external validation. Systems check whether other reputable domains link to the organization's domain and validate its claims. Organizations must build transparent relationships with academic institutions, government agencies, and respected media outlets. These external validations reinforce the credibility signals that the organization's own publications generate. When multiple trustworthy platforms corroborate a charity's impact data, generative algorithms confidently present that charity as a reliable source.

Distributed Validation Over Centralized PR

External corroboration matters, but centralized press releases do not provide it, because algorithms treat syndicated promotional content as filler. In the past, organizations blasted identical press releases to hundreds of news wires and expected an immediate boost in visibility. Today, this centralized approach wastes resources and ignores how machines evaluate external validation. Generative models look for organic conversations, independent reviews, and diverse media mentions across the internet.

AI Systems Reward Independent Mentions

Organizations must shift their attention toward niche forums, specialized regional media, and video platforms. Recent data shows that YouTube has overtaken Reddit as the most frequently cited social platform in generated responses. This shift means that a well-documented video that explains a community project carries more algorithmic weight than a generic national press release. Working with a specialized agency helps organizations navigate this fragmented media environment.

Distributed validation occurs when organizations implement strategic communication practices that machines can verify:

- They discuss operational challenges on specialized Reddit communities.
- They publish detailed video documentaries about beneficiary outcomes on YouTube.
- They secure feature articles in local newspapers where the charity operates.
- They answer complex questions on Quora with verified organizational accounts.
- They earn mentions in academic journals that relate to the organization's mission.

These deliberate actions build a web of third-party citations. When intelligent algorithms detect that an organization actively participates in these diverse platforms, they increase their trust in that organization's authority.

Structural Imperative for Narrative Stories

Intelligent algorithms increase their trust in an organization's authority through third-party citations, but they cannot process the emotion in human storytelling that connects with donors. Algorithms require clear structural hierarchies to parse, categorize, and extract information. Organizations must balance the emotional resonance of their mission with the strict structural requirements of machine extraction. For instance, when an animal rescue organization tells a heart-wrenching story about a rescued dog, generative models ignore it if the page lacks clear formatting.

Structure the Story for Both Humans and AI

Authors must use a precise hierarchy to organize their narratives. They should front-load direct answers at the beginning of the article and expand into the emotional narrative further down the page. This approach satisfies the algorithm's need for immediate facts while it preserves the emotional journey for the human reader. Writers use descriptive headers to break the narrative into logical sections that machines can easily index.

Technical formatting plays an equally important role in narrative visibility. Organizations need to implement structured code behind their stories to translate human language into machine-readable data. Proper schema markup implementation increases AI citation probability by 2.5 times. This code tells the algorithm exactly what the page discusses, who authored it, and what entities it references. When organizations combine emotional storytelling with structured data, they create powerful assets that satisfy both human supporters and intelligent systems.
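As an illustration of the structured code described above, here is a minimal sketch that builds JSON-LD markup using the schema.org vocabulary (`Article`, `Person`, `NGO`, `Thing`). The helper name `build_article_jsonld` and every name, date, and URL in the example are placeholders, and the right properties for a given page depend on its content.

```python
import json


def build_article_jsonld(headline, author_name, org_name, org_url, topics, date_published):
    """Return a schema.org Article description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,  # ISO 8601 date string
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "NGO", "name": org_name, "url": org_url},
        # "about" lists the entities the story references.
        "about": [{"@type": "Thing", "name": topic} for topic in topics],
    }
    return json.dumps(data, indent=2)


# All names and the URL below are placeholders for illustration.
markup = build_article_jsonld(
    headline="How Bella Recovered After Rescue",
    author_name="Jane Doe",
    org_name="Example Animal Rescue",
    org_url="https://www.example.org",
    topics=["animal rescue", "dog rehabilitation"],
    date_published="2025-01-15",
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The printed `<script>` element is what would sit in the page's HTML alongside the visible story, so the narrative stays untouched for human readers.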

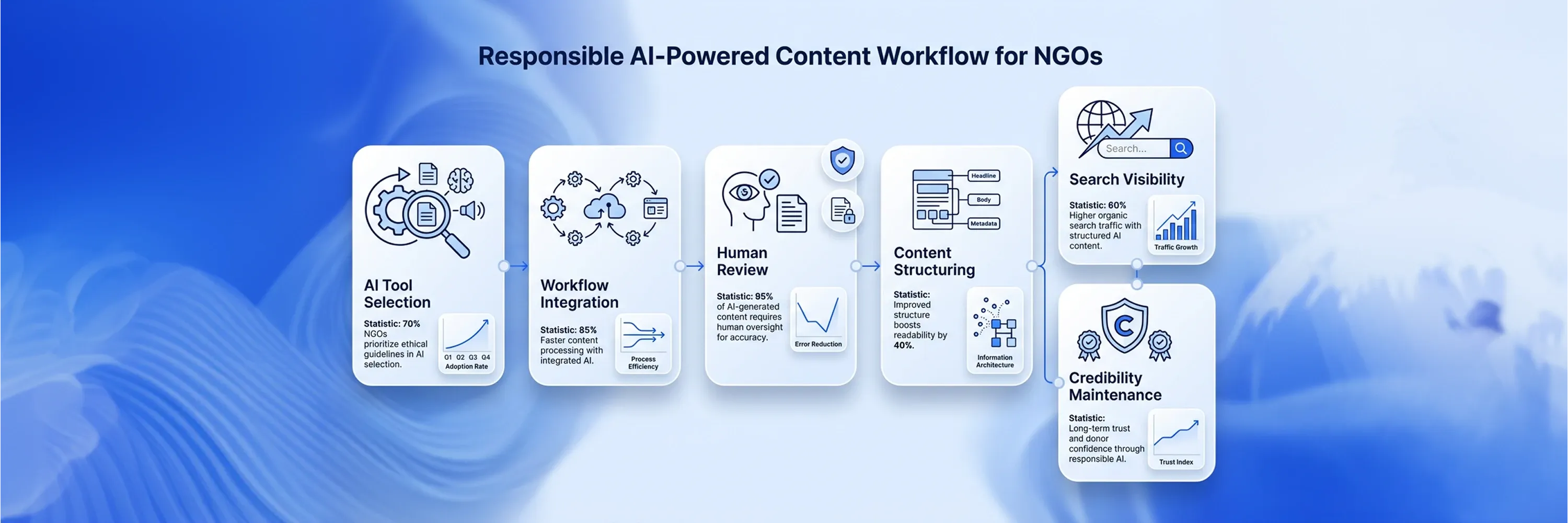

Workflows in AI Content Marketing

To satisfy intelligent systems with these powerful assets, organizations must make significant operational changes to produce machine-readable resources at scale. Teams can't rely on random publishing schedules or isolated communication efforts. They must establish systemic workflows that align every piece of published information with the organization's core mission. This integration forms the backbone of a complete digital strategy. Every department must coordinate to ensure the organization presents a unified front to intelligent systems.

Consistency Shapes How AI Understands an Organization

Message consistency plays a vital role in how algorithms process organizational data. When different departments publish conflicting information about the charity's operations, they confuse generative models. These models look for repeated, verifiable facts to formulate their responses. Industry research demonstrates that consistency across messaging reduces output inaccuracy by an estimated 30% to 40%. Discrepancies in impact numbers or mission statements prevent the algorithm from trusting the organization as a definitive source.

To maintain this consistency, organizations must unify their AI and content marketing initiatives. They must create centralized databases of approved facts, figures, and brand narratives that all content creators use. This cohesive approach ensures that every new article, video, and social media post reinforces the same core facts. The consistent publication of accurate data strengthens content authority signals over time. The algorithm learns to trust the organization because the organization constantly delivers reliable and structured information.

Shift in Measurement Attribution

As the algorithm learns to trust the organization through reliable information, generative response models force organizations to abandon traditional traffic-centric metrics. For years, organizations measured success by counting page views and unique website visitors. Because generative models provide answers directly on the results page, website traffic naturally declines. This decline doesn't mean the organization has lost visibility. It simply means that discovery happens elsewhere. Early-stage donor discovery increasingly happens inside AI platforms rather than on nonprofit websites.

Organizations must shift their focus to actionable measurements that reflect real-world impact. They need to track volunteer signups, donation volume, and direct inquiries instead of generic website clicks. The tracking of these specific actions provides definitive proof that the digital strategy works. A successful AI and content marketing campaign will drive high-intent users who already learned about the organization through an intelligent system's response.

Audit How AI Platforms Represent the Organization

To track performance accurately, organizations must implement routine visibility audits to monitor how intelligent platforms perceive their brand. These audits help organizations evaluate their share of voice across different models.

Organizations execute these audits by following a structured evaluation process:

- They identify the core questions potential donors ask about the organization's mission.
- They enter these specific queries into major generative platforms to observe the responses.
- They document which third-party websites the intelligent systems cite as sources.
- They analyze the sentiment and accuracy of the generated summaries about the charity.
- They adjust publishing workflows to address any gaps in the algorithmic knowledge base.

These steps allow organizations to map exactly where they stand in the modern discovery ecosystem.
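The documentation step of such an audit can be sketched as a small tally. This assumes a team records, by hand, which URLs each platform cited for each donor question. The function name `citation_share`, the row format, and the example domains are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_share(audit_rows, own_domain):
    """Return (share of answers citing own_domain, Counter of third-party domains cited).

    audit_rows: list of dicts with a "query" string and a "cited_urls" list,
    recorded manually while running each donor question through a platform.
    """
    third_party = Counter()
    answers_citing_us = 0
    for row in audit_rows:
        # Normalize each cited URL to a bare domain, counted once per answer.
        domains = {
            urlparse(url).netloc.lower().removeprefix("www.")
            for url in row["cited_urls"]
        }
        if own_domain in domains:
            answers_citing_us += 1
        third_party.update(domains - {own_domain})
    share = answers_citing_us / len(audit_rows) if audit_rows else 0.0
    return share, third_party


# Hypothetical audit log with placeholder domains.
rows = [
    {"query": "best clean water charities",
     "cited_urls": ["https://www.example.org/impact", "https://news.example.com/wells"]},
    {"query": "how are village wells funded",
     "cited_urls": ["https://news.example.com/funding"]},
]
share, others = citation_share(rows, "example.org")
print(share, others.most_common(3))
```

Repeating the same tally on a fixed schedule gives a simple trend line for share of voice, and the third-party counter shows which outside sites the platforms already trust on the topic.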

Structural Imperative for Narratives

Once organizations map their position in the modern discovery ecosystem, they realize that machines process human stories differently than donors do. Algorithms do not feel empathy. They parse text through its structure to extract information. If an animal rescue organization writes an emotional narrative about a rescued dog but fails to implement technical hierarchies, intelligent systems ignore the text. Machines rely on code and headings to determine an article's topic. Therefore, content creators must apply a structured approach to every published story so machines can read it.

Organize Narratives Around a Clear Hierarchy

Authors use a precise hierarchy to organize their narratives and satisfy human readers and algorithmic crawlers. They place direct answers and factual summaries at the top of the article. Authors present the facts first and then expand into the emotional narrative further down the page. This technique gives the algorithm immediate access to the necessary data and preserves the emotional journey for human supporters. Writers also use descriptive headers to break the text into distinct sections that machines parse easily.

Technical formatting translates human language into machine-readable data. Developers implement structured data code behind the visible text. This invisible code tells the algorithm exactly who authored the page, what entities the text references, and what specific facts the story contains. Research shows that proper schema markup implementation increases AI citation probability by 2.5 times. Organizations reach wider audiences through generative responses when they combine emotional stories with this technical foundation.

AI and Content Marketing Workflows

To combine emotional stories with this technical foundation, organizations must rethink how they operate to produce machine-readable resources. Departments often publish information separately, and that fragments the digital presence. To fix this issue, teams must establish systemic workflows that govern every piece of published information. This integration sits at the center of an effective NGO digital marketing strategy. When every department coordinates its publication efforts, the organization presents a unified front that intelligent systems understand.

Algorithms build their knowledge base when they find repeated, verifiable facts across different platforms. For example, if a charity's fundraising team publishes one set of impact numbers and the program team publishes different numbers, the algorithm cannot determine which facts are correct. Consequently, the model drops the organization as a source. Industry data demonstrates that consistency across messaging and brand descriptions reduces AI output inaccuracy by 30% to 40%. Consistent facts teach the machine exactly what the organization does.
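A conflict like the one in this example can be caught before publication with a simple check. The sketch below assumes that each department's published figures are collected as `(department, metric, value)` tuples. The function name `find_conflicts` and the sample numbers are hypothetical.

```python
from collections import defaultdict


def find_conflicts(published_claims):
    """Group claims by metric and return the metrics reported with more than one value.

    published_claims: iterable of (department, metric, value) tuples.
    """
    values_by_metric = defaultdict(dict)
    for department, metric, value in published_claims:
        values_by_metric[metric][department] = value
    return {
        metric: by_dept
        for metric, by_dept in values_by_metric.items()
        if len(set(by_dept.values())) > 1
    }


# Hypothetical figures: the two teams disagree on one metric only.
claims = [
    ("fundraising", "wells_built_2024", 120),
    ("programs", "wells_built_2024", 112),
    ("fundraising", "countries_served", 9),
    ("programs", "countries_served", 9),
]
print(find_conflicts(claims))
```

Here only `wells_built_2024` is flagged, which tells editors exactly which figure to reconcile before either team publishes again.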

Build a Central Source of Truth

Organizations establish cohesive databases that contain approved facts, mission descriptions, and impact metrics. Content creators pull from these central repositories whenever they draft a new article or report. The organization generates strong content authority signals across the internet because every piece of content uses the exact same data points. The algorithm constantly encounters identical facts connected to the organization, and it eventually recognizes the organization as the definitive source for that specific topic. This AI and content marketing approach builds algorithmic trust over time.
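One lightweight way to enforce a central repository is to have drafts reference facts by name instead of typing numbers directly. The sketch below uses Python's standard `string.Template` for this. The `APPROVED_FACTS` dictionary, its keys, and the organization name are assumptions for illustration, and a real repository would more likely be a reviewed shared file or database.

```python
from string import Template

# Hypothetical approved facts; values are placeholders, not real data.
APPROVED_FACTS = {
    "org_name": "Example Water Trust",
    "wells_built_2024": "112",
    "countries_served": "9",
}


def render(draft: str) -> str:
    """Fill $placeholders from APPROVED_FACTS; raise KeyError for any unapproved placeholder."""
    return Template(draft).substitute(APPROVED_FACTS)


draft = "$org_name built $wells_built_2024 wells across $countries_served countries in 2024."
print(render(draft))
```

Because `substitute` raises `KeyError` for any placeholder missing from the dictionary, a writer cannot publish a figure that has not been approved centrally.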

Measurement and Attribution Models

Because this AI and content marketing approach builds algorithmic trust over time, generative response models require a new approach to measuring success. For decades, organizations tracked page views and unique website visitors to gauge their digital reach. Website traffic naturally declines because intelligent systems provide answers directly on the results page. This decline doesn't mean the organization has lost its audience. Research indicates that early-stage donor discovery increasingly happens inside AI platforms rather than on nonprofit websites. Supporters learn about the mission through algorithmic summaries before they decide to visit the organization's domain.

Organizations track actionable metrics that reflect impact. They evaluate donation volume, volunteer signups, and direct inquiries. These metrics provide proof that an AI and content marketing strategy reaches interested supporters. Users arrive with high intent and a strong readiness to participate when they finally click through from AI search engines.

How Visibility Audits Work

Organizations monitor their performance through routine visibility audits. These audits help teams evaluate their share of voice across different generative models.

Teams execute these audits through a structured process:

- Identify the questions potential donors ask about the organization.
- Enter these specific queries into major generative platforms.
- Document which third-party websites the intelligent systems cite.
- Analyze the accuracy of the generated summaries about the charity.
- Adjust publication schedules to address gaps in the algorithmic knowledge base.

These steps allow organizations to map exactly where they stand in the modern discovery ecosystem.

Conclusion

To summarize, organizations that map exactly where they stand in the modern discovery ecosystem and secure an early advantage safeguard their long-term survival and mission impact. These organizations shift their perspective from ranking high to winning the answer because traditional keyword optimization delivers diminishing returns. They prioritize structured data and decentralized trust building to ensure their initiatives remain visible to important supporters.

The integration of AI and content marketing establishes a strong foundation for future discovery. Organizations can take the next step by initiating a thorough visibility audit to evaluate their current standing. This audit helps them adapt quickly and establish themselves as valuable resources within the AI search visibility ecosystem.