Introduction

Generative artificial intelligence has changed how people search for and consume information online. Semrush data suggests that LLM traffic will surpass traditional organic search traffic by 2028, a behavioral shift that disrupts established digital marketing strategies. The rapid spread of these technologies gives organizations new efficiencies, but a lack of strategic direction leaves many institutions vulnerable to publishing inauthentic messaging. When teams implement AI tools for content creation without clear guidelines, they risk alienating their core supporters and losing their digital authority.

This article serves as a strategic roadmap to help organizations gain visibility in emerging conversational search engines and protect organizational credibility. Understanding how these models evaluate and cite information helps organizations transition from traditional search practices to generative engine optimization.

Shift to Generative Engine Optimization

Organizations are moving to generative engine optimization because conversational search works differently from the blue-link results that traditional Search Engine Optimization (SEO) targeted. Conversational search engines read, synthesize, and answer user questions directly on the results page. This shift makes Generative Engine Optimization (GEO) necessary for published digital materials. Industry experts define GEO as the practice of structuring content so that AI-powered search platforms recommend specific brands in their generated answers.

How Content Structure Improves Visibility in AI Search

Precise formats help writers when they use AI tools for content creation. If a website structures its pages logically, artificial intelligence models extract that information easily. Complex formatting confuses language models and prevents them from reading the text properly. One citation analysis found that 44.2% of ChatGPT citations came from the top third of the web page. This suggests that placing core messages early improves visibility for the entire article. Updating search practices for modern algorithms also helps the mission reach the right audience. As AI content platforms evolve, these systems prioritize websites that deliver direct answers without filler. Publishing workflows must adapt to meet these new algorithmic requirements.
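The top-third finding above can be turned into a quick editorial check. The following is a minimal sketch, not a tool the article's sources describe: the function name `in_top_third` and the sample page text are hypothetical, and measuring position by character count is a simplifying assumption.

```python
def in_top_third(page_text: str, key_message: str) -> bool:
    """Return True if key_message first appears within the first third of page_text."""
    position = page_text.lower().find(key_message.lower())
    if position == -1:
        # The message does not appear on the page at all.
        return False
    return position < len(page_text) / 3


# Made-up page text, used only to illustrate the check.
page = (
    "Our food bank served 12,000 families in 2024. "
    "The sections below explain how the program works, who funds it, "
    "and how volunteers can sign up for weekend shifts."
)
print(in_top_third(page, "served 12,000 families"))
print(in_top_third(page, "weekend shifts"))
```

An editor could run a check like this on a draft before publication and move the core message higher when it returns False.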

Evaluation of AI Tools for Content Creation

Organizations select software carefully to adapt their publishing workflows to these new algorithmic requirements, and the right software dictates the success of a digital communications strategy. Many users encounter issues because they treat all these systems as identical applications. However, different language models serve distinct needs and handle specific scenarios differently. A single application cannot write long-form reports, analyze data, and generate social media posts simultaneously. Each platform brings unique capabilities that require specific prompting techniques and human oversight.

Matching AI Tools to Specific Content Workflows

System evaluation pairs daily workflows with the appropriate technology. Users who understand the technical strengths of each model produce materials that maintain high quality across all communication channels. This strategic match provides confidence to delegate repetitive administrative tasks to software programs. Instead of generic software lists, careful analysis shows how specific programs integrate into existing approval processes. This careful evaluation ensures that technology supports the mission rather than complicating it. The right tool for the specific writing task saves hours of frustration.

ChatGPT as Strategic Multi-Tool

ChatGPT is one such tool. OpenAI developed it as a versatile assistant for daily administrative tasks. Professionals often use it to structure raw data, summarize meeting notes, and draft email responses, which saves hours. Because ChatGPT handles these routine assignments efficiently, employees can focus on complex campaign strategies. The platform processes large volumes of text quickly. It organizes messy survey results into readable tables and formats rough ideas into coherent outlines.

Because the model adapts to various prompt structures, many users treat it as the foundation for their digital workflows. These nonprofit AI tools establish a baseline of productivity when integrated into daily operations. Users build trust in the technology when they see how it accelerates the drafting process without replacing their editorial judgment.

Claude for Nuanced Brand Tone Among Nonprofit AI Tools

While ChatGPT accelerates general drafting tasks, Anthropic designed Claude with specific guardrails that benefit users who handle sensitive subjects. This model preserves authentic brand voice during long-form writing tasks. Grant proposals and impact reports require software that maintains a professional and measured tone. Claude analyzes existing brand guidelines and replicates that specific voice across multiple documents.

The model shows sound judgment when it discusses complex social issues. It avoids exaggerated claims that could damage a reputation. Research shows that intellectual honesty gives content a 1.7x citation boost in Claude. Writers who use these AI content platforms to draft policy papers often find that the software handles delicate terminology carefully. This cautious approach prevents embarrassing messaging errors.

Perplexity for Verifiable Research

Perplexity also helps organizations prevent messaging errors. The platform operates as a research engine that prioritizes factual accuracy over creative writing. It actively searches the internet and cites authoritative sources for every claim it generates. This focus on attribution makes the software valuable for fact checks and for verifying policy statistics. Users do not have to guess where the information originated because the system provides footnote links to the original articles.

Different models evaluate credibility differently. Recent studies indicate that models favor specific source types. For example, Gemini prefers official websites, and Claude prefers user-generated content. Perplexity builds confidence because it shows its work. When policy researchers use these systems, they quickly gather background information from government databases and academic journals. The platform streamlines the research phase of content creation.

Authenticity Challenge with Trust Signals

Even though platforms streamline the research phase of content creation, the rapid adoption of AI tools for content creation raises valid concerns that organizations might lose human connection. Communities support initiatives because they believe in the people behind the mission. When automation handles communications entirely, the resulting robotic tone alienates the core audience. Generative models cannot replicate genuine field experience or emotional intelligence.

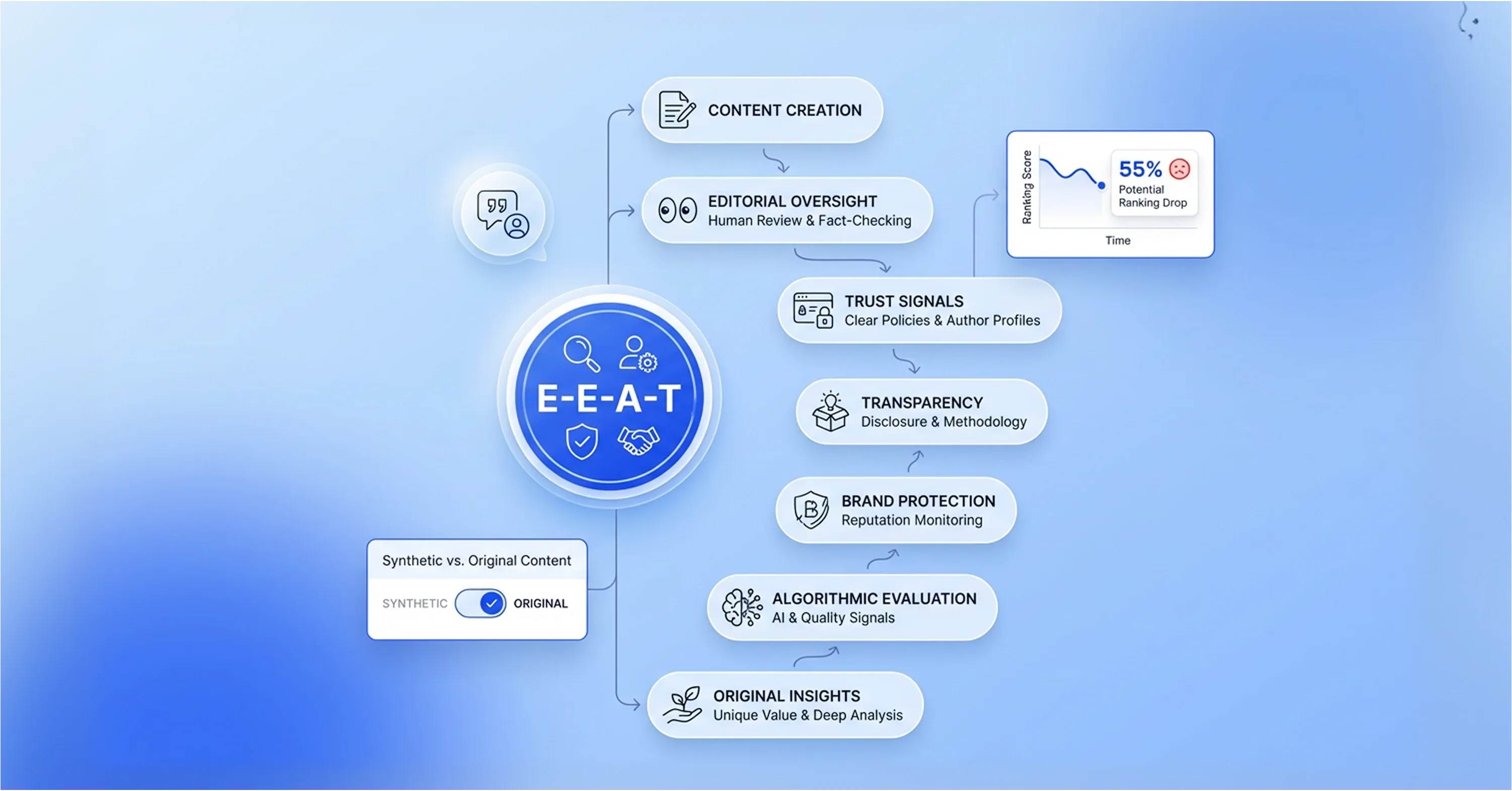

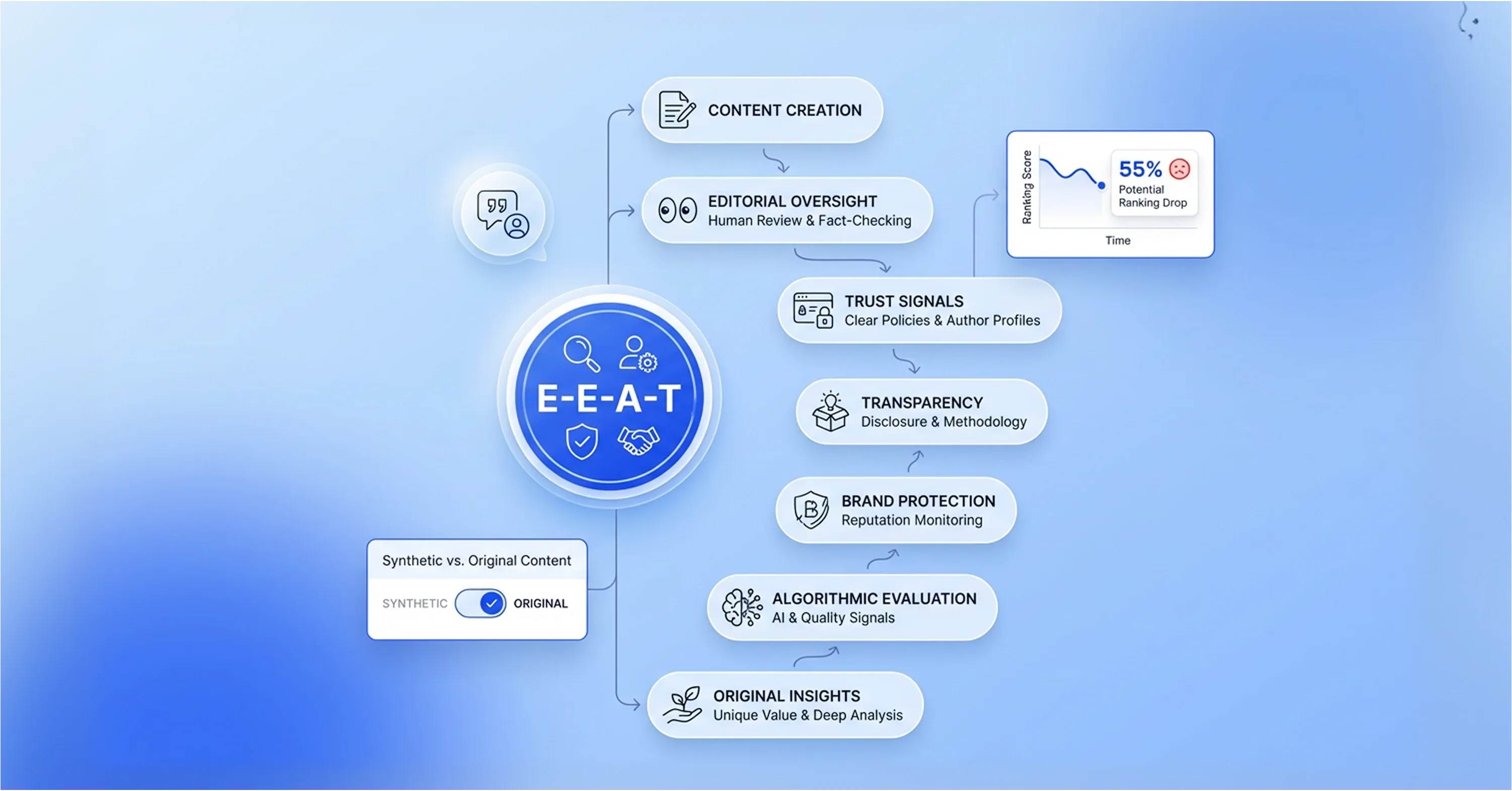

Building Trust Signals and Editorial Integrity in AI Content

To overcome this challenge, publishers build Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) into every published piece. Search algorithms look for these human elements when they evaluate web pages. Search engine engineers confirm that AI engines prioritize content that demonstrates these qualities when they choose citations. A well-written strategy for digital content creation centers on real-world impact data. If a site relies solely on synthetic text, algorithms rank its pages lower than sites that feature original human insights.

Strict editorial oversight over all published media ensures brand protection. Safe integration of these systems follows specific guidelines:

- Audits compare all machine-generated text against established data.
- Case studies from recent field operations replace synthetic examples.
- Clear labels on synthetic images maintain transparency with the public.
- Original expert quotes appear alongside automated research summaries.

Visual materials present reputational challenges. Audiences seek comfort in stories that reflect reality. A recent survey revealed that 55% of respondents would feel discouraged from giving if AI-generated images lacked verification.

Cost-Effective Integration Methods

These strict editorial safeguards require resources, and many businesses hesitate to upgrade from free software tiers because they worry about the financial return on investment. These businesses often use free versions to manage tight budgets, but they gain data privacy and advanced features through paid subscriptions. Upgrading to an enterprise account protects sensitive client information from public language models.

ROI and Efficiency Gains from Paid AI Content Tools

Teams see this strategic investment pay off quickly when they measure their productivity gains. A recent study shows that professionals completed tasks 25% faster and produced 40% higher quality work when they used advanced generative models. Staff members use these performance improvements to draft business proposals and company newsletters in half the usual time. Financial data supports this technological shift. Standard content marketing delivers three dollars for every one dollar invested, but organizations that use AI-powered strategies achieve a 748% ROI.
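To compare the two figures above on the same scale, the arithmetic can be sketched as follows. The article does not say how either number was calculated, so this sketch assumes the common net-gain definition of ROI (return minus cost, divided by cost) and assumes that "three dollars for every one dollar invested" means three dollars of total return. The function names are hypothetical.

```python
def roi_percent(total_return: float, cost: float) -> float:
    """ROI as a percentage: net gain divided by cost (assumed definition)."""
    return (total_return - cost) / cost * 100


def return_per_dollar(roi_pct: float) -> float:
    """Total dollars returned per dollar invested for a given ROI percentage."""
    return 1 + roi_pct / 100


# Three dollars returned per dollar spent, expressed as an ROI percentage.
print(roi_percent(3.0, 1.0))

# A 748% ROI, expressed as dollars returned per dollar spent.
print(round(return_per_dollar(748.0), 2))
```

Under these assumptions, the standard figure corresponds to a 200% ROI, and a 748% ROI corresponds to roughly $8.48 returned per dollar. If the original sources define ROI differently, the comparison changes accordingly.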

How AI Content Tools Improve Marketing Efficiency

When marketing teams integrate paid AI content platforms into their operations, they do not need to increase their headcount to produce more materials. These teams use this efficiency to execute broader search engine marketing strategies that attract more customers to the business. Marketing departments view the initial software costs as minor compared to the significant increase in digital output and search visibility. Paid software turns a standard marketing department into a highly efficient publishing operation that consistently meets its campaign goals.

Reputational Risk Mitigation

Even a highly efficient publishing operation faces dangers. Companies can damage their credibility overnight when they publish unverified automated text. Language models often invent facts or misrepresent complex industry issues, and audiences quickly notice these errors along with unnatural phrasing. After an audience loses trust in a company's digital messaging, rebuilding the relationship can take years of difficult work. Editorial managers must review every generated paragraph and confirm its accuracy before publishing any marketing materials.

Industry data reveals a lack of governance across the sector despite these reputational risks. Recent statistics show that 76% of organizations operate without an artificial intelligence policy, and this oversight exposes them to compliance vulnerabilities. Editorial managers must establish strict editorial rules for all AI tools for content creation to protect their brand integrity.

Governance Strategies for Maintaining Content Accuracy and Trust

Organizations must move beyond awareness of risks and actively implement structured processes that ensure accuracy, consistency, and accountability in every piece of published content. Without clear governance systems, even well-intentioned teams can introduce errors that damage credibility. A disciplined editorial framework allows companies to scale content production while maintaining full control over quality and brand integrity.

Teams must implement specific governance measures to guide their daily operations and maintain precision in their public messaging:

- Source verification: Editors must cross-reference all machine-generated statistics against official company reports.
- Brand alignment: Staff members must rewrite automated text to match the unique organizational voice.
- Data verification: Subject matter experts must review complex policy statements to prevent algorithmic hallucinations.

Organizations use these safeguards to produce accurate search engine visibility materials that both algorithms and human readers trust. A documented governance policy prevents factual errors and ensures that technology serves the business safely.
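The source verification step described above can be partly automated as a first pass before human review. The sketch below is an illustration under stated assumptions: `unverified_percentages` is a hypothetical helper, the verified set stands in for figures an editor has already confirmed against official reports, and the draft sentence is made up. It only flags percentage figures and does not replace the editor's cross-reference.

```python
import re


def unverified_percentages(draft: str, verified: set[str]) -> list[str]:
    """Return percentage figures in draft that are not in the verified set."""
    # Matches figures such as "76%" or "12.5%".
    found = re.findall(r"\d+(?:\.\d+)?%", draft)
    return [figure for figure in found if figure not in verified]


# Hypothetical set of figures an editor has already confirmed.
verified_figures = {"76%", "4%"}

# Made-up draft text, used only to illustrate the check.
draft = "Our survey found 76% lack a policy and 12.5% plan to write one."
print(unverified_percentages(draft, verified_figures))  # ['12.5%']
```

Any figure the function returns would go to an editor for manual verification before publication.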

Human-in-the-Loop Implementation Workflows

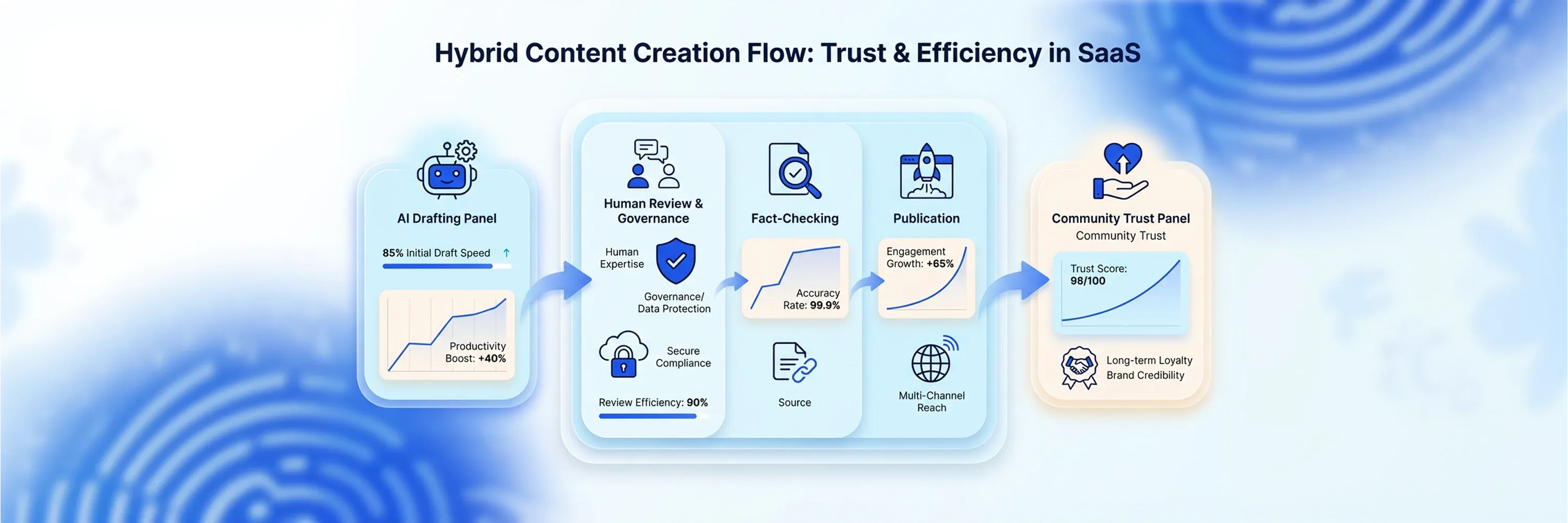

A documented governance policy translates into a structured editorial workflow that combines machine efficiency with strict human oversight. Many professionals experiment with generative technology, including nonprofit AI tools, but few departments formalize their daily operations. While 81% of staff members use artificial intelligence individually, only 4% of organizations have documented workflows. Because of this operational disconnect, companies experience inconsistent messaging and compromised data security.

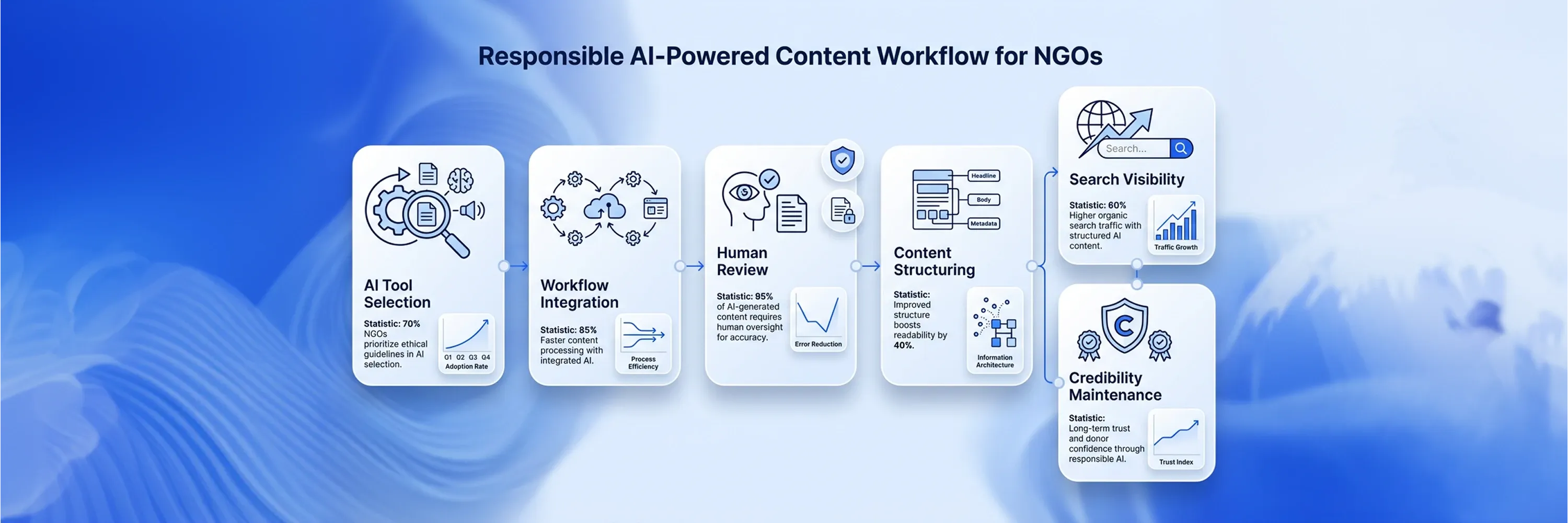

Implementing a Human-in-the-Loop Content Workflow

Marketing departments adopt a human-in-the-loop approach to solve this problem effectively. Employees begin the process when they write a detailed prompt that strictly excludes all sensitive client information or proprietary financial data. The language model then generates a rough outline or a preliminary draft based solely on that secure prompt. Industry experts confirm that efficiency improves and authenticity remains intact when staff members use software to draft first versions and conduct mandatory human reviews.

A human writer takes full control of the document after the machine produces the initial text. The writer injects real-world case studies, refines the emotional tone, and verifies all factual claims against internal records. A senior editor then reviews the piece to guarantee high quality before anyone approves the final publication. Teams rely on this structured collaborative sequence to publish digital materials frequently, and this process protects their ethical standards and customer trust.
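The sequence described above (a prompt with sensitive details removed, a machine draft, a writer's revision, and an editor's review) can be sketched as a small gate that blocks publication until every stage is complete. This is a minimal sketch under assumptions: the stage names, the `Draft` class, the `redact` helper, and the sensitive terms are all hypothetical, and simple string replacement is not a complete data-protection measure.

```python
from dataclasses import dataclass, field

# Hypothetical terms a team wants to keep out of prompts.
SENSITIVE_TERMS = ("Acme Corp", "Q3 revenue")

# Assumed stage order, following the workflow described in the text.
STAGES = ["machine_draft", "writer_revision", "editor_review"]


def redact(prompt: str, terms: tuple[str, ...] = SENSITIVE_TERMS) -> str:
    """Replace each sensitive term with a placeholder before the prompt is sent."""
    for term in terms:
        prompt = prompt.replace(term, "[REDACTED]")
    return prompt


@dataclass
class Draft:
    text: str
    completed: list[str] = field(default_factory=list)

    def complete(self, stage: str) -> None:
        """Mark a stage as done, enforcing the fixed order of STAGES."""
        if len(self.completed) >= len(STAGES):
            raise ValueError("all stages are already completed")
        expected = STAGES[len(self.completed)]
        if stage != expected:
            raise ValueError(f"expected stage {expected!r}, got {stage!r}")
        self.completed.append(stage)

    @property
    def publishable(self) -> bool:
        """A draft is publishable only after every stage has been completed."""
        return self.completed == STAGES


prompt = redact("Draft a newsletter about Acme Corp and our Q3 revenue.")
draft = Draft(text="(model output would go here)")
draft.complete("machine_draft")
draft.complete("writer_revision")
print(draft.publishable)  # False: the senior editor has not reviewed it yet
draft.complete("editor_review")
print(draft.publishable)  # True
```

Enforcing the order in code means a draft cannot skip the writer's revision or the editor's review, which mirrors the mandatory human review the workflow calls for.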

Conclusion

Because this structured collaborative sequence protects customer trust, organizations improve their impact when they balance algorithmic optimization with authentic human connection. This balance keeps their digital presence strong. Genuine human impact and community trust remain the strongest ranking factors even as search engines evolve. Teams that view AI tools for content creation as collaborative assistants rather than wholesale replacements can scale operations and keep their core values. As a next step, institutions should audit their current workflows for efficiency and authenticity, and establish a clear AI content generation framework that delivers machine-readable data and preserves the emotional resonance that drives their mission forward.